In February 2025, the California State University system, the largest public higher education system in the United States, announced it had reached an agreement with industry and the state government to make AI tools, training, and teaching and learning opportunities available to all 23 CSU campuses. According to the press release, the CSU system will ensure “the system’s more than 460,000 students and 63,000 faculty and staff have equitable access to cutting-edge tools that will prepare them to meet the rapidly changing education and workforce needs of California.”

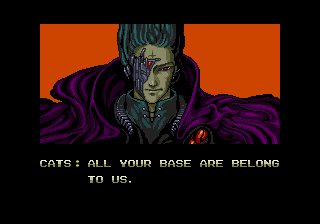

Phrases like “AI-ready,” “empowered workforce,” and “skilled graduates ready to meet the demands of California’s future economy” paint a particular picture… that reminds me of batteries in The Matrix.

The word “democratizing” showing up in the press release doesn’t dissolve this picture I’m forming in my mind.

The statements selected from CSU AI Workforce Acceleration Board members for the press release reflect an interesting curatorial decision. All industry representatives. All telling us how the “unprecedented” alliance is an “honor” that promises to make the work of teaching and learning more “efficient,” more “future ready.” Confidence. Bright hope for the future. It all points to a future that’s already here. And we are meeting it.

The batteries are still there in my mind’s eye.

Looking at the CSU AI Workforce Acceleration Board page, I do see faculty members and students. A couple of representatives from the Academic Senate of the CSU (ASCSU). A handful of administrators from the CSU Office of the Chancellor. A few from a small number of CSU campuses. The president of the Cal State Students Association (CSSA). One student. Batteries.

I recently participated in an effort to understand what students and faculty think about their needs in understanding AI, what they think an AI-literate person ought to know and do, and how knowledge and skills about AI relate to their academic work. I talked to faculty about AI and their teaching. I also talked to students about their AI use in academic work, including the work they anticipate doing after graduation.

One interaction has stayed with me. The student talked about her use of AI to develop outlines and brainstorm ideas. She then expressed her awareness of how her peers use AI.

“I use it, but only for this and this. Not like them.”

I recognized the move. It had the texture of intentional distance. Considering the context of uncertainty, the awareness of environmental and societal concerns, it was a tell.

Putting the CSU press release together with what I perceived in that room with that student, I noticed not just the batteries. I recognized the transaction.

This is the manufactured inevitability. They’re not selling to us. We’ve been bought.
